
i built an ai clone of the entrepreneur i look up to

i have a sticker on my wall that says "clone Naval Ravikant." it's been there for months. Naval's book genuinely changed how i think about leverage, wealth, and happiness, and when i saw that someone had actually built an AI clone of him i thought: okay, this is possible now, this is real.

then i came across what Arlan did. he's a Kazakh high school dropout who built Trynia, and i first noticed it because of its AI clones. that made this whole idea feel real and close, not like some distant Silicon Valley experiment.

but when it came time to build my own, i didn't clone Naval. i went with someone whose life and journey felt more directly relevant to where i am and where i want to go.

why Akmal Paiziev

i discovered Akmal Paiziev about a year ago through his Telegram channel and immediately got pulled in. if you're from Uzbekistan you already know his work even if you don't know his name. whenever i went out to grab food at pretty much any restaurant or coffee shop there was Express24, whenever i needed a ride there was MyTaxi. he built both of those along with Workly, Maxtrack, and now he's running Numeo.ai out of Palo Alto. his relocation to San Francisco was honestly inspiring to follow.

the thing about Akmal Paiziev is that he's incredibly well-documented on the internet: YouTube videos, Startup Maktabi series, podcasts with CACTUZ and AVLO and SEREDIN, his Telegram channel where he wrote an entire book called "From Tashkent to Silicon Valley," interviews, articles, LinkedIn posts. he puts out a lot of content which is great except some days you wake up and there's like 40-50 new Telegram posts and the FOMO of skipping them is very real (yeah i do skip sometimes lol).

and that's actually what sparked the whole idea. what if i could just ask Akmal Paiziev things? not him personally obviously, but an AI version that's trained on everything he's publicly said and written. like having a personal advisor built from his actual words and experiences. i genuinely respect Akmal Paiziev and see him as a good model for where i want to go, so having that kind of access to his thinking felt like something worth building.

how it started

the whole thing kicked off on a Saturday. i opened Claude Code in my Mac terminal, described what i wanted (a chatbot that sounds like Akmal Paiziev, grounded in his real content, deployed on the web), and just let the agent start building.

the core concept behind it is actually pretty straightforward: you take everything Akmal Paiziev has said publicly (all the YouTube transcripts, the Telegram book chapters, interviews, articles, LinkedIn posts), break it into smaller chunks, and store them in a database. then when someone asks a question you find the most relevant chunks and feed them to an AI model that responds as Akmal Paiziev. in the AI world they call this RAG (Retrieval-Augmented Generation), but in simpler terms it just means the AI is far less likely to make stuff up, because it reads from Akmal Paiziev's actual content before answering anything.
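to make the "chunk it, retrieve it, feed it to the model" idea concrete, here's a minimal sketch. it's not the code my agents wrote: the names (Chunk, chunkText, buildPrompt), the word-based chunk size, and the prompt wording are all assumptions for illustration.

```typescript
// a minimal sketch of the chunk -> retrieve -> prompt flow.
// every name here is hypothetical, not taken from the real project.

interface Chunk {
  source: string; // e.g. a video title or a book chapter
  text: string;
}

// split a long text into overlapping windows of words
function chunkText(source: string, text: string, size: number, overlap: number): Chunk[] {
  if (size <= 0 || overlap < 0 || overlap >= size) {
    throw new Error("need size > 0 and 0 <= overlap < size");
  }
  const words = text.split(/\s+/).filter((w) => w.length > 0);
  const chunks: Chunk[] = [];
  const step = size - overlap;
  for (let start = 0; start < words.length; start += step) {
    chunks.push({ source, text: words.slice(start, start + size).join(" ") });
    if (start + size >= words.length) break; // this window already reached the end
  }
  return chunks;
}

// put the retrieved chunks in front of the question so the model answers from them
function buildPrompt(question: string, retrieved: Chunk[]): string {
  const context = retrieved
    .map((c, i) => "[" + (i + 1) + "] (" + c.source + ") " + c.text)
    .join("\n");
  return (
    "Answer in the speaker's voice using ONLY the excerpts below. " +
    "Cite excerpt numbers.\n\n" + context + "\n\nQuestion: " + question
  );
}
```

the overlap is there so a sentence that straddles a chunk boundary still shows up whole in at least one chunk. which chunks count as "retrieved" is the search step, and that's a separate problem.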

the first working version was live in about 2-3 hours. it was basic and rough around the edges but it actually worked, you could ask it about startups and it would answer using Akmal Paiziev's real quotes and experiences. then i spent the next 2-3 days going back and forth making it actually good, though honestly most of that time was spent waiting for rate limits to reset rather than actual building.

running 5 AI agents like an orchestra

okay so here's where things got interesting and a little chaotic.

i wasn't always into this whole "agentic coding" thing. the first time i tried vibe coding in Cursor's terminal and watched the implementation unfold right in front of my eyes was genuinely life-changing for me. i won a few hackathons purely by vibe coding after that, and the whole Moltbot/OpenClaw drama kind of encouraged me to keep going. when someone asked Peter (the founder) how much of the code was handwritten versus AI-generated, his response was basically "people still write code manually?" that was a good mindset shift for me, and i stopped feeling guilty about using AI to code. it changed my whole vocabulary from "vibe coding" to "agentic coding."

my progression went like this: i first wrote code on Claude's website itself, then switched to VS Code terminal where i could watch each change land in real time, and then i tried the Mac CLI terminal and that was pure magic. my agent was doing everything on its own, working on my local files, pushing to GitHub, even deploying to Netlify once i connected my accounts.

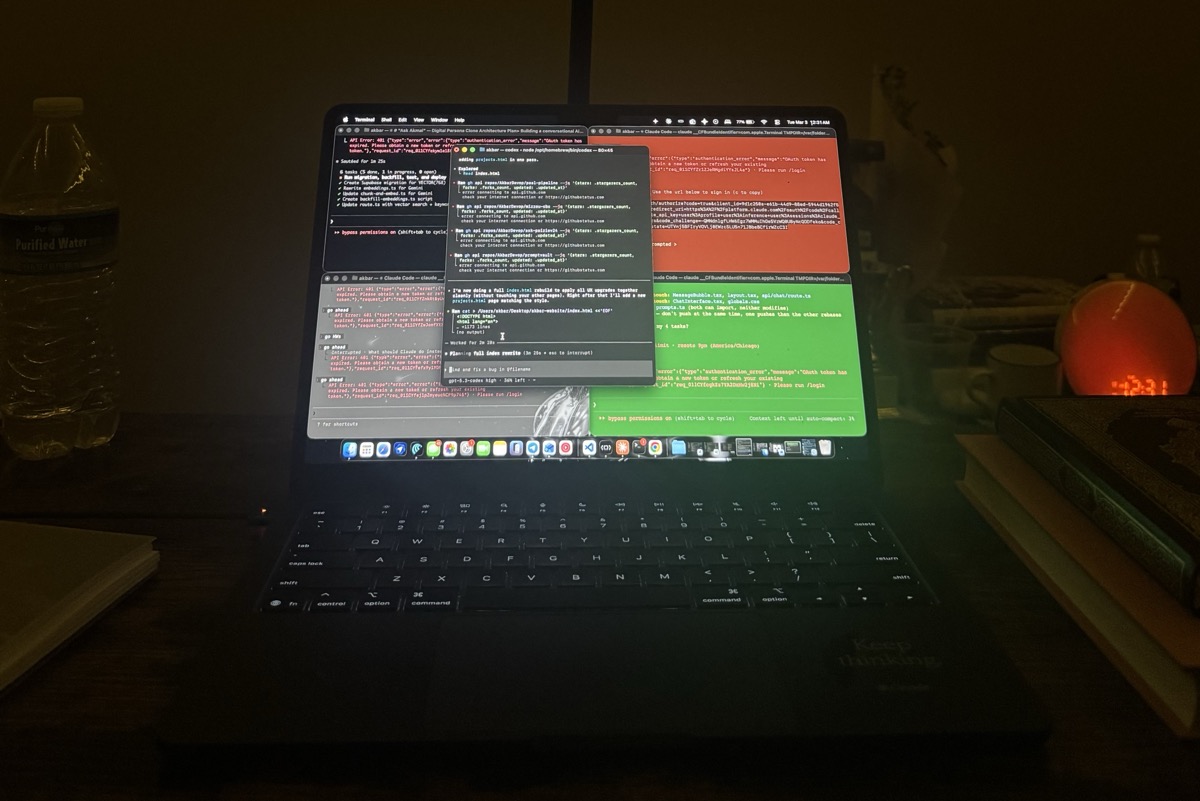

but at some point working with just one agent started feeling really slow, because sometimes my prompts were pretty long and the agent would work for several minutes while i just sat there getting distracted scrolling through other stuff. after a few back-and-forth questions with Claude i realized i could run multiple agents with the same account. i opened another terminal, then another one, and then another, and at peak i had 5 Claude agents running simultaneously on the same project.

i'm not going to lie, it felt like conducting an orchestra or being a chef cooking multiple Michelin-worthy dishes at once. each agent had its own responsibility, for example:

- Agent 1 handled the data pipeline: downloading 89 YouTube transcripts, scraping articles, processing the Telegram book, chunking everything into the database

- Agent 2 built the UI/UX: the chat bubbles, dark mode, language toggle, suggested questions, the whole visual experience

- Agent 3 worked on backend features: source citations, streaming responses, the thinking indicator, analytics

to avoid them stepping on each other i assigned specific files to each agent. "you own ChatInterface.tsx," "you own route.ts," and sometimes i'd relay messages between them like "Agent 2 needs you to add an isStreaming prop" which honestly made me feel like a project manager for AI employees.

what i didn't think about though: 5 agents absolutely burn through Claude Pro token limits. i was hitting the limit in like 5-10 minutes flat, then having to wait 5 hours for my account to reset before i could go again. the actual building time was probably 2-3 hours of focused work but it got spread across days because of these constant rate limit walls.

the YouTube war

probably the funniest and most frustrating struggle was getting all the YouTube transcripts.

Akmal Paiziev has 99 videos scattered across various channels and my agent downloaded about 42 transcripts in quick succession before YouTube straight up blocked my IP address. not the videos themselves, just the subtitle/caption downloads. HTTP 429, too many requests.

what followed was honestly kind of absurd. my agent and i tried everything we could think of: routing through Tor (YouTube shows CAPTCHAs to Tor exit nodes), free proxy lists from GitHub (all got consent pages), reverse-engineering YouTube's internal API with protobuf encoding (got 400 Bad Request), CORS proxies (timeouts everywhere). nothing worked.

the actual solution ended up being pretty low-tech. we waited about 20 minutes for the rate limit to naturally reset, downloaded 20 more transcripts, got blocked again, and then i USB-tethered my iPhone to my Mac for a completely fresh IP address. got 12 more before that IP got blocked too. we basically kept rotating between waiting and switching IPs until we got 89 out of 99 videos (the remaining 10 had subtitles disabled by the channel owners so there was literally nothing we could do about those).
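the reason the wait-then-switch-IP routine was bearable is that nothing had to be downloaded twice: every new attempt only goes after the videos that are still missing. a minimal sketch of that bookkeeping is below. it's my own illustration, not the script we used: the names (Progress, startProgress, applyResult) are hypothetical, and it assumes a fetcher that reports a block as HTTP 429 and disabled captions as 404.

```typescript
// bookkeeping for a resumable transcript download.
// all names are hypothetical; "404 means no captions" is an assumption.

interface FetchResult {
  status: number; // HTTP status of the caption request
  body: string;   // transcript text when status is 200
}

interface Progress {
  done: { [videoId: string]: string }; // videoId -> transcript
  noSubtitles: string[];               // captions disabled, nothing to fetch
  pending: string[];                   // still to try on the next run
}

function startProgress(videoIds: string[]): Progress {
  return { done: {}, noSubtitles: [], pending: videoIds.slice() };
}

// record one result; returns true when the caller should stop and wait or switch IP
function applyResult(progress: Progress, videoId: string, result: FetchResult): boolean {
  if (result.status === 200) {
    progress.done[videoId] = result.body;
  } else if (result.status === 404) {
    progress.noSubtitles.push(videoId);
  } else {
    // 429 or anything unexpected: keep the video in pending and stop for now
    return true;
  }
  progress.pending = progress.pending.filter((id) => id !== videoId);
  return false;
}
```

a download loop would call applyResult after every request and stop as soon as it returns true. after the wait (or the new IP) it simply runs again over whatever is left in progress.pending.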

making it 20x smarter

the first version used basic keyword search. if you asked "how to find investors" it would literally look for the word "investors" in the text. miss a keyword and you miss the answer completely, which meant a lot of good content was invisible to users just because they phrased things differently.
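here's a tiny sketch of why keyword search misses things. the function, the stop-word list, and the two sample sentences are all made up for illustration (they're not real quotes and not the project's actual code).

```typescript
// naive keyword search: keep chunks that contain at least one meaningful query word.
// note: the \W+ tokenizer is ASCII-only, which is fine for this English-only demo.

const STOP_WORDS = ["how", "to", "the", "a", "an", "of", "for", "in", "and", "is"];

function keywordSearch(query: string, chunks: string[]): string[] {
  const terms = query
    .toLowerCase()
    .split(/\W+/)
    .filter((w) => w.length > 0 && STOP_WORDS.indexOf(w) === -1);
  return chunks.filter((chunk) => {
    const lower = chunk.toLowerCase();
    return terms.some((t) => lower.indexOf(t) !== -1);
  });
}

// made-up sample chunks
const sample = [
  "we met investors at a demo day",
  "raising a seed round took months of pitching to VCs",
];

// only the first chunk matches, even though the second is just as relevant
const hits = keywordSearch("how to find investors", sample);
```

the second sentence is clearly about finding investors, but it shares no keyword with the question, so it never reaches the model.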

the fix was something called vector embeddings which basically means converting every text chunk into a mathematical representation that captures the meaning of the text, not just the exact words. so now "how to find investors" matches content about fundraising, pitching, venture capital, and raising money even if the word "investors" never actually appears in the text. this alone made the chatbot roughly 20x smarter in terms of finding relevant content.

and the best part is that it works across languages. Akmal Paiziev speaks in Uzbek, Russian, and English across different interviews and videos, and as long as the embedding model is multilingual, vector search can connect meaning across all three languages, which keyword matching could never do.
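under the hood, "matching by meaning" usually comes down to comparing vectors, most commonly with cosine similarity. the sketch below shows the math in plain TypeScript. in the actual project the database (pgvector) does the similarity search, so treat this as an illustration only: the names EmbeddedChunk, cosineSimilarity, and topK are mine, and real embeddings have hundreds of dimensions rather than the toy vectors you'd test this with.

```typescript
// cosine similarity and a brute-force top-k search over embedded chunks.
// all names are hypothetical; a vector database would normally do this for you.

interface EmbeddedChunk {
  text: string;
  embedding: number[];
}

// 1 means same direction (similar meaning), 0 means unrelated
function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length !== b.length) throw new Error("vectors must have the same length");
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    const x = a[i] ?? 0;
    const y = b[i] ?? 0;
    dot += x * y;
    normA += x * x;
    normB += y * y;
  }
  if (normA === 0 || normB === 0) return 0; // a zero vector has no direction
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// score every chunk against the query embedding and keep the k best
function topK(queryEmbedding: number[], chunks: EmbeddedChunk[], k: number): EmbeddedChunk[] {
  return chunks
    .map((chunk) => ({ chunk, score: cosineSimilarity(queryEmbedding, chunk.embedding) }))
    .sort((left, right) => right.score - left.score)
    .slice(0, k)
    .map((scored) => scored.chunk);
}
```

because the comparison happens on vectors and not on words, a question in English can land next to an answer in Russian or Uzbek, provided the embedding model placed them close together.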

getting embeddings for all 966 chunks was its own little adventure though because Google's Gemini free tier has a daily quota limit for generating embeddings. my agent would process 20-40 chunks, hit the quota wall, wait, try again. we ended up writing a script that loops with 60-second pauses between batches and ran it multiple times across a day until we got to 100% coverage, all at zero cost.
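the shape of that loop is roughly what's sketched below. this is a reconstruction under assumptions, not the actual script: Row, nextBatch, coverage, and embedAll are hypothetical names, embedBatch stands in for whatever embedding API you call (assumed here to throw when the quota is hit), and sleep is passed in so the example doesn't depend on any particular runtime.

```typescript
// sketch of a resumable "embed a batch, pause, repeat" loop.
// all names are hypothetical; embedBatch is assumed to throw on a quota error.

interface Row {
  id: number;
  text: string;
  embedding: number[] | null; // null means "not embedded yet"
}

// the next rows that still need an embedding, at most batchSize of them
function nextBatch(rows: Row[], batchSize: number): Row[] {
  return rows.filter((r) => r.embedding === null).slice(0, batchSize);
}

// fraction of rows that already have an embedding (1 means 100% coverage)
function coverage(rows: Row[]): number {
  if (rows.length === 0) return 1;
  return rows.filter((r) => r.embedding !== null).length / rows.length;
}

async function embedAll(
  rows: Row[],
  embedBatch: (texts: string[]) => Promise<number[][]>,
  sleep: (ms: number) => Promise<void>,
  batchSize: number,
  pauseMs: number
): Promise<number> {
  for (;;) {
    const batch = nextBatch(rows, batchSize);
    if (batch.length === 0) break; // everything is embedded
    let vectors: number[][] | null = null;
    try {
      vectors = await embedBatch(batch.map((r) => r.text));
    } catch {
      vectors = null; // quota hit or another failure: stop and rerun later
    }
    if (vectors === null || vectors.length !== batch.length) break;
    for (let i = 0; i < batch.length; i++) {
      const row = batch[i];
      const vector = vectors[i];
      if (row !== undefined && vector !== undefined) row.embedding = vector;
    }
    await sleep(pauseMs); // e.g. 60000 for a 60-second pause between batches
  }
  return coverage(rows);
}
```

in Node or a browser, sleep could be `(ms) => new Promise((resolve) => setTimeout(resolve, ms))`. since nextBatch only picks rows without an embedding, running the script again after a quota reset continues where it stopped, which is what makes "run it multiple times across a day" work.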

the final product

try ask akmal paiziev →

you can ask it about building startups in Central Asia, hiring your first employees, fundraising, what Akmal Paiziev thinks about relocating to Silicon Valley, and it actually answers using his real words, with source citations showing where each answer came from.

what i took away from this

agentic coding is genuinely real and it works. i'm not a backend engineer. i didn't write the Supabase migrations by hand or configure pgvector indexes manually. i described what i wanted and my agents built it. the skill isn't writing code anymore, it's knowing what to ask for and how to coordinate multiple agents without them breaking each other's work.

multi-agent development is powerful but you have to manage it. running 3-5 agents feels incredibly productive until they start conflicting. at one point one agent cleared the entire database to re-insert fresh data while another agent was in the middle of generating embeddings for those same rows. assigning clear file ownership and being very explicit about boundaries between agents is something you have to figure out quickly.

rate limits are the actual final boss. YouTube caption downloads, Gemini embedding quotas, Claude Pro token limits. every free or affordable service has some kind of rate limit and this project hit all of them. the whole thing could've been built in a single afternoon if not for all the waiting.

the data matters way more than the model. Gemini 2.5 Flash is the model powering this and it's honestly good enough. the real difference between a bad AI clone and a convincing one is the quality and coverage of the source data. 89 video transcripts plus a 250-page book plus articles and interviews means the AI actually has something meaningful and real to draw from instead of making things up.

what's next

this is just the first clone. i want to build the same thing for a few other entrepreneurs whose thinking i find valuable, people whose advice i'd want to have on demand whenever i need it. and eventually i want to clone myself too since i've been pretty active posting and documenting things publicly for years and there's probably enough data out there to make it work.

the sticker on my wall still says "clone Naval." but i started with Akmal Paiziev, and honestly i think that says more about where i'm heading than anything else.

the project is open source on GitHub.

to anyone wondering, this is the single prompt that started the clone project.

sometimes only one prompt is separating you from greatness. join the movement, prompt and ship.

https://promptvaultt.netlify.app/prompts/3fb75a03-5267-47d0-8aa6-5022ad2e3c5c
